Introduction

The author of the ebook has extensive experience with ActiveMQ (and Camel) and recently started working on the Kafka project. The ebook gives his perspective on both message brokers, discussing each one in turn:

ActiveMQ

As the author explains, much of the ActiveMQ architecture was dictated by the JMS specification. The strength of JMS is that it offers a standard way of interfacing with message brokers - and for this reason JMS has been nominated as one of the best Java enterprise APIs.

A big part of JMS is concerned with reliable, persistent messaging without putting too much burden on the messaging clients. For instance, it is the message broker's responsibility to keep messages until the consumers have processed them. This makes the broker more complex, and (at least for ActiveMQ) performance degrades as the number of consumers goes up.

Consequently, JMS message brokers like ActiveMQ are less suited for the "universal data pipeline" pattern - in fact it's considered an anti-pattern for the JMS broker architecture. And because the broker keeps messages for the consumers, consumers that go offline cause unconsumed messages to pile up and take up more disk space (if this makes you think Kafka uses less disk space: read on!). Note that many JMS brokers allow setting a message lifetime, after which unconsumed messages can be discarded - so in practice the problem may not be as big as you might expect.
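To make the effect of offline consumers and message lifetimes concrete, here is a minimal in-memory sketch. It is a toy model, not ActiveMQ or the JMS API: the `BacklogBroker` class and its methods are hypothetical names invented for illustration, time is passed in explicitly as a plain number, and each consumer gets its own copy of every message (real brokers store messages far more efficiently).

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

/** Toy model (hypothetical, not a real broker API): one backlog per subscriber. */
class BacklogBroker {
    private static final class Entry {
        final String body;
        final long expiresAt; // time after which the message may be discarded

        Entry(String body, long expiresAt) {
            this.body = body;
            this.expiresAt = expiresAt;
        }
    }

    private final Map<String, Deque<Entry>> backlogs = new HashMap<>();

    /** Registers a consumer; from now on the broker keeps messages for it. */
    void subscribe(String consumer) {
        backlogs.putIfAbsent(consumer, new ArrayDeque<>());
    }

    /** Stores the message for every subscriber, online or not. */
    void publish(String body, long now, long timeToLive) {
        for (Deque<Entry> backlog : backlogs.values()) {
            backlog.addLast(new Entry(body, now + timeToLive));
        }
    }

    /** Returns the next unexpired message for the consumer, or null if none is left. */
    String receive(String consumer, long now) {
        Deque<Entry> backlog = backlogs.get(consumer);
        if (backlog == null) {
            return null;
        }
        while (!backlog.isEmpty()) {
            Entry entry = backlog.pollFirst();
            if (entry.expiresAt > now) {
                return entry.body;
            }
            // Expired entries are silently dropped.
        }
        return null;
    }

    /** Discards expired messages and returns how many the broker still holds. */
    int pendingCount(long now) {
        int total = 0;
        for (Deque<Entry> backlog : backlogs.values()) {
            backlog.removeIf(entry -> entry.expiresAt <= now);
            total += backlog.size();
        }
        return total;
    }
}
```

In this sketch a consumer that never calls `receive` keeps its whole backlog on the broker until the messages expire, which is the disk-space concern described above; the lifetime bounds how large that backlog can grow.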

Kafka

The design goal of Kafka was (reportedly) to enable the "universal data pipeline" at LinkedIn. This required a different kind of broker, because the pattern is an anti-pattern in JMS for the reasons explained above. So where JMS and ActiveMQ are tuned for reliable persistent messaging (and therefore can't support data pipelines very well), Kafka's design focuses on exactly these data pipelines. It does offer persistence, but with weaker guarantees than JMS-based brokers.

To achieve its goals, Kafka uses a "unified destination model" called "topic" (something in between the notion of a JMS topic and a JMS queue). Messages are kept for a while (and can be consumed more than once via resettable pointers if desired). However, message persistence is limited in time and it is the consumer's responsibility to consume relevant messages before the broker deletes them. So: messages can be lost (in addition to being delivered more than once).
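The retention model described above can be illustrated with a small in-memory sketch. This is a toy model and not the Kafka client API: `RetentionLog`, `poll`, `seek` and `expireOldest` are hypothetical names chosen for this example, and retention is triggered by hand instead of by time or size limits.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Toy model (hypothetical, not the Kafka API) of a log with resettable consumer pointers. */
class RetentionLog {
    private final List<String> records = new ArrayList<>();
    private long baseOffset = 0; // offset of the oldest record still retained
    private final Map<String, Long> positions = new HashMap<>(); // consumer -> next offset

    /** Appends a record and returns its offset. */
    long append(String value) {
        records.add(value);
        return baseOffset + records.size() - 1;
    }

    /** Returns the next record for the consumer, or null if it has caught up. */
    String poll(String consumer) {
        // A pointer that refers to deleted records is moved up to the oldest retained one.
        long position = Math.max(positions.getOrDefault(consumer, baseOffset), baseOffset);
        if (position >= baseOffset + records.size()) {
            positions.put(consumer, position);
            return null;
        }
        positions.put(consumer, position + 1);
        return records.get((int) (position - baseOffset));
    }

    /** Resets a consumer's pointer, for example to re-read earlier records. */
    void seek(String consumer, long offset) {
        positions.put(consumer, offset);
    }

    /** Simulates retention: deletes the oldest records whether or not anyone consumed them. */
    void expireOldest(int count) {
        int removed = Math.min(count, records.size());
        records.subList(0, removed).clear();
        baseOffset += removed;
    }
}
```

Both effects from the text show up in this model: calling `seek` back to an earlier offset delivers the same record again, and a consumer that first polls after `expireOldest` has run never sees the deleted records.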

Overall, the design of the Kafka broker increases disk usage by as much as 1000 times what ActiveMQ (or JMS brokers) need, without any guarantees for consumers that fail to pick up their messages before the broker decides to delete them. However, it's really fast and can handle up to millions of messages per second.

Author's note: I found several discussions suggesting that persistence is not guaranteed in Kafka because it does not immediately flush to disk. If this is true, then there is actually no persistence guarantee at all - only best-effort. This probably explains why it can be very fast - at the cost of possible message loss.

Conclusions

Our take

Whereas the book claims that exactly-once delivery does not exist, we have shown how easy it is in this article. That is: it's easy if you use JMS/XA capable brokers like ActiveMQ. In the case of Kafka, it's quite different: because Kafka delegates the burden of exactly-once to the message consumer, you're bound to encounter the pitfalls of the idempotent consumer. Message loss is also possible. Needless to say, XA is not supported by Kafka.

We recommend using Kafka for high-performance monitoring use cases where message loss is not important, such as diagnostic logging events, performance metrics, or other statistical event types. But if you care about exactly-once delivery, if your messages are valuable or if you don't need a "universal data pipeline" then it's best to stick to a classical broker like ActiveMQ.
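For readers unfamiliar with the idempotent consumer mentioned above, here is a minimal sketch of the idea. It assumes every message carries a unique ID; the `IdempotentConsumer` class and its payment example are hypothetical and not taken from the ebook or from any Kafka API.

```java
import java.util.HashSet;
import java.util.Set;

/** Toy idempotent consumer (hypothetical example): redeliveries are detected by message ID. */
class IdempotentConsumer {
    // In-memory only: a real consumer would have to persist these IDs
    // atomically together with the business update, or duplicates slip through after a crash.
    private final Set<String> processedIds = new HashSet<>();
    private int balance = 0;

    /** Applies the amount once per message ID; returns false when the message is a duplicate. */
    boolean onMessage(String messageId, int amount) {
        if (!processedIds.add(messageId)) {
            return false; // already processed, ignore the redelivery
        }
        balance += amount;
        return true;
    }

    int balance() {
        return balance;
    }
}
```

The comment in the code points at the main pitfall: the duplicate check and the business update have to succeed or fail together. With an XA-capable broker that coordination is handled by the transaction; here it becomes the application's job.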

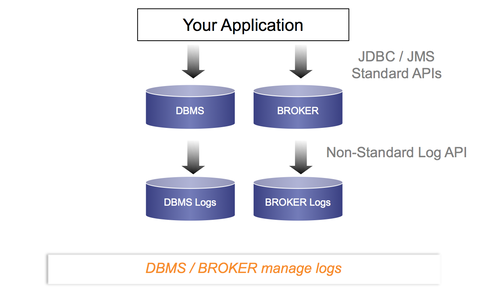

Without Kafka

Without Kafka, you benefit from the infrastructure logic offered by your DBMS and/or message broker:

For regular applications, this is probably what you want.

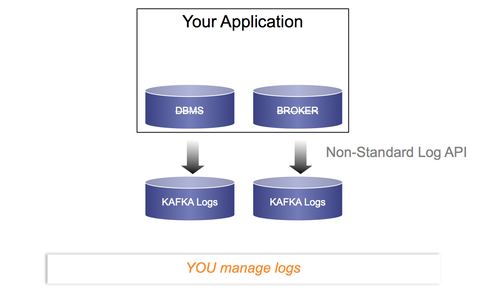

With Kafka

With Kafka you only have log files, meaning you have to do everything else yourself in your code:

If you're LinkedIn or any other Internet giant then this may be acceptable to you. Alternatively, if managing log files is your thing then this is what you want. In all other cases, you may want to stick to what is out there already...

Next steps?

Download now to start exactly-once processing with ActiveMQ and XA:

FREE download: TransactionsEssentials (Includes working samples that use ActiveMQ for exactly-once delivery)
